If you’re searching for clear, reliable updates on quantum computing milestones, you’re likely trying to separate real breakthroughs from speculative hype. The field is evolving rapidly, with new processor records, error-correction advances, and commercial pilots announced at a pace that’s hard to track—let alone evaluate.

This article is designed to give you a focused, up-to-date view of the most important quantum computing milestones, what they actually mean for industry adoption, and how they impact cybersecurity, optimization, AI, and next-generation networks. Instead of repeating headlines, we analyze technical benchmarks, peer-reviewed findings, and verified industry data to explain why each development matters.

By the end, you’ll have a clear understanding of where quantum technology truly stands today, which breakthroughs signal real progress, and what practical shifts may be coming next—so you can stay informed, strategic, and ahead of the curve.

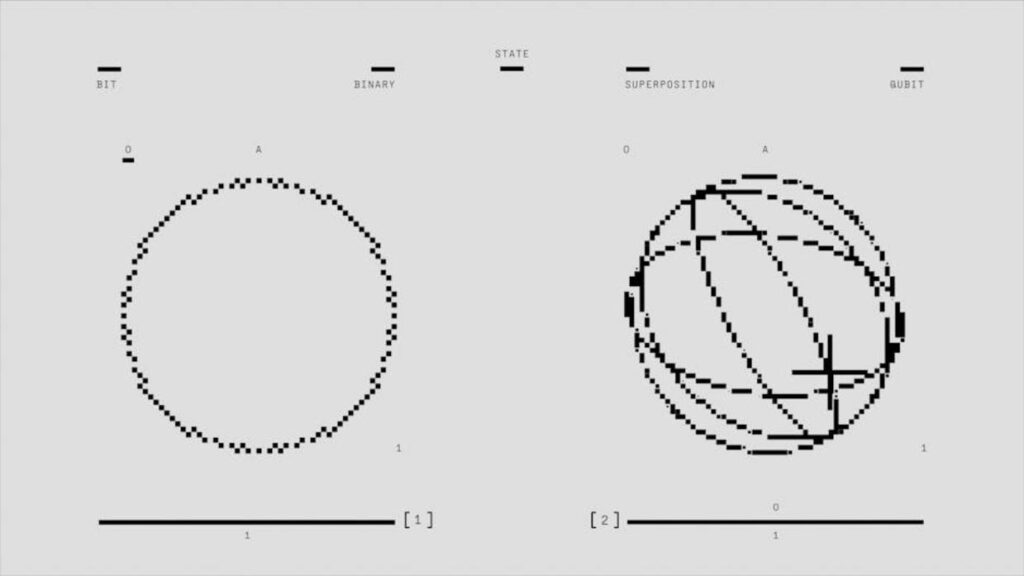

The biggest obstacle in quantum computing isn’t speed; it’s survival. Decoherence—the loss of a qubit’s fragile quantum state due to environmental noise—acts like static drowning out a whisper. Even stray heat, vibration, or electromagnetic radiation can collapse superposition, the ability of a qubit to exist in multiple states at once.

However, recent breakthroughs are pushing coherence times beyond the microsecond barrier and, in some platforms, into the millisecond range. For example, trapped ion systems now sustain coherence for seconds under laboratory conditions, while silicon spin qubits have surpassed millisecond stability through isotopic purification. These aren’t just technical footnotes; they mark quantum computing milestones that directly expand circuit depth—meaning more sequential operations before errors overwhelm results.
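To make the link between coherence and circuit depth concrete, here is a back-of-the-envelope sketch. The function name and the 50 ns gate time are illustrative assumptions, not figures from any specific device, and the result is only a ceiling: gate errors and readout reduce real usable depth further.

```python
def max_sequential_gates(coherence_ns: int, gate_ns: int) -> int:
    """Upper bound on back-to-back gates that fit inside one coherence time.

    Both arguments are in nanoseconds. This ignores gate errors, readout,
    and idle time, so real usable depth is lower.
    """
    if coherence_ns < 0 or gate_ns <= 0:
        raise ValueError("coherence_ns must be >= 0 and gate_ns must be > 0")
    return coherence_ns // gate_ns


# Illustrative numbers only (assumptions, not measurements from any device).
GATE_NS = 50
for label, coherence_ns in [("100 microseconds", 100_000), ("1 millisecond", 1_000_000)]:
    print(label, "->", max_sequential_gates(coherence_ns, GATE_NS), "gates at most")
```

Under these assumed numbers, a tenfold gain in coherence time raises the depth ceiling tenfold, which is why coherence records matter beyond the headline.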

In practical terms, longer coherence transforms a delicate lab setup into a programmable engine capable of tackling optimization, cryptography, and materials simulation. That’s the difference between a science fair prototype and something edging toward commercial relevance (yes, fewer quantum tantrums).

So, what’s driving this stability?

- Dynamical decoupling: rapid pulse sequences that cancel environmental interference, extending usable qubit life.

- Advanced material fabrication: cleaner substrates and reduced defect densities that minimize noise sources.

Moreover, improved cryogenic control and vacuum isolation further suppress decoherence. Each incremental gain compounds, making room for deeper circuits and algorithms that previously could not finish before noise took over. The breakthrough isn’t flashy; it’s endurance, and at scale that reliability is what builds real computational advantage.
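The pulse-sequence idea from the list above can be sketched with a toy model. This is a minimal classical simulation, assuming each experimental run sees a random but constant frequency offset (quasi-static noise). The function name, noise strength, and time units are all illustrative assumptions. A single refocusing pulse halfway through (the Hahn echo, the simplest such sequence) reverses the sign of phase accumulation so the offset cancels.

```python
import cmath
import random


def coherence(detunings, total_time, pi_pulse_times=()):
    """Average phase coherence over many runs with static detunings.

    Each pi pulse flips the sign of subsequent phase accumulation.
    Returns 1.0 for perfect coherence and values near 0 when phases scramble.
    """
    total = 0j
    for d in detunings:
        phase, sign, last = 0.0, 1.0, 0.0
        for t_next in (*pi_pulse_times, total_time):
            phase += sign * d * (t_next - last)
            sign, last = -sign, t_next
        total += cmath.exp(1j * phase)
    return abs(total) / len(detunings)


rng = random.Random(0)
# Assumed noise model: Gaussian detunings, standard deviation 1 rad per time unit.
detunings = [rng.gauss(0.0, 1.0) for _ in range(5000)]

for t in (0.5, 2.0, 5.0):
    free = coherence(detunings, t)                        # no pulses
    echo = coherence(detunings, t, pi_pulse_times=(t / 2,))  # one pulse at the midpoint
    print(f"t={t}: free={free:.3f} echo={echo:.3f}")
```

In this idealized model the echo recovers coherence completely because the noise never changes during a run. Real noise fluctuates in time, which is why practical sequences use many pulses and still only extend coherence rather than preserve it indefinitely.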

Taming the Noise: Milestones in Quantum Error Correction (QEC)

Quantum systems are fragile. Unlike classical bits (which are either 0 or 1), qubits hold continuous-valued superpositions, so even small disturbances from heat, radiation, or stray electromagnetic fields nudge the state off target. The resulting gradual loss of quantum information is called decoherence. In practice, errors aren’t a possibility; they’re a guarantee.

Some skeptics argue that hardware improvements alone will reduce noise enough to skip heavy error correction. It’s a fair question—after all, classical chips improved through miniaturization. But qubits don’t scale like transistors. Even with cleaner fabrication, quantum states remain probabilistic and environmentally sensitive. That’s why QEC is non-negotiable.

A breakthrough came with the first logical qubit demonstrations. A logical qubit encodes one stable unit of information across multiple physical qubits, so if one fails, the group corrects it (think RAID storage, but for quantum states). In 2023, teams showed logical qubits outperforming their individual components—one of the most important quantum computing milestones to date.
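The redundancy idea can be illustrated with a classical toy: a three-bit repetition code with majority voting. This is only a sketch under simplifying assumptions (independent bit flips, perfect decoding). Real quantum codes must also handle phase errors and cannot simply copy or read out the data, so treat the function names and numbers here as hypothetical illustration, not as how any lab implements a logical qubit.

```python
import random


def analytic_logical_error(p: float) -> float:
    """Probability that 2 or 3 of 3 independent bits flip: 3p^2(1-p) + p^3."""
    return 3 * p**2 * (1 - p) + p**3


def simulated_logical_error(p: float, trials: int = 100_000, seed: int = 1) -> float:
    """Monte Carlo estimate: majority vote fails when at least 2 of 3 bits flip."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(3))
        if flips >= 2:
            failures += 1
    return failures / trials


p_physical = 0.05  # assumed per-bit error probability, for illustration only
print("physical:", p_physical)
print("logical (analytic):", analytic_logical_error(p_physical))
print("logical (simulated):", simulated_logical_error(p_physical))
```

The encoded bit fails less often than a single bit whenever the per-bit error probability is below one half, which is the basic bargain of error correction: more hardware per unit of information in exchange for lower error.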

The surface code, a leading QEC method, has proved especially promising. It spreads error detection across a 2D lattice of qubits and, once physical error rates fall below a critical threshold (commonly estimated at around 1%), suppresses logical errors further with every increase in code size. Pro tip: when evaluating quantum platforms, check whether their physical error rates sit below this threshold; that’s the line between theory and scalability.
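A commonly quoted rule of thumb shows why the threshold matters so much: the logical error rate scales roughly as a prefactor times (p / p_threshold) raised to the power (d + 1) / 2, where d is the code distance. The sketch below uses that heuristic with an assumed 1% threshold and an assumed prefactor of 0.1. Both values are illustrative, and the formula is an approximation rather than a prediction for any particular hardware.

```python
def logical_error_per_round(p_phys: float, distance: int,
                            p_threshold: float = 0.01,
                            prefactor: float = 0.1) -> float:
    """Heuristic surface-code scaling: prefactor * (p / p_th) ** ((d + 1) / 2).

    p_threshold and prefactor are illustrative assumptions.
    """
    if distance < 1 or distance % 2 == 0:
        raise ValueError("distance should be a positive odd integer")
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) // 2)


for p_phys in (0.001, 0.012):  # one value below the assumed threshold, one above
    rates = [logical_error_per_round(p_phys, d) for d in (3, 5, 7)]
    print(f"p_phys={p_phys}:", [f"{r:.1e}" for r in rates])
```

Below the assumed threshold, each step up in distance cuts the logical error rate by a constant factor. Above it, adding qubits makes things worse, which is why the threshold is the number to check first.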

Robust QEC will anchor distributed systems, much like [edge computing](https://foxtpax.com/the-rise-of-edge-computing-in-modern-infrastructure/) reshaped data locality strategies. Without error-corrected nodes, future quantum networks can’t reliably distribute entanglement or transmit information securely. Noise control isn’t glamorous, but it’s the foundation everything else depends on.

Quantum Simulators at Work: Solving Problems Beyond Classical Reach

Quantum simulation—using a controllable quantum system to mimic another quantum system—is widely considered the field’s first true killer app. In simple terms, instead of forcing a classical computer to approximate quantum behavior (an exponentially hard task), scientists let one quantum device emulate another. It’s like fighting fire with fire.
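To see why the classical approach is exponentially hard, consider the memory needed just to store the full state of n qubits on a classical machine: 2^n complex amplitudes. The sketch below assumes 16 bytes per amplitude (two 64-bit floats) and a brute-force state-vector representation. Cleverer classical methods exist for special cases, so this is a worst-case illustration, and the function name is hypothetical.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store every amplitude of an n-qubit state vector."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return (2 ** num_qubits) * bytes_per_amplitude


for n in (10, 30, 50):
    gib = statevector_bytes(n) / 2**30  # convert bytes to GiB
    print(f"{n} qubits -> {gib:,.6f} GiB")
```

Every added qubit doubles the requirement. Under these assumptions, 30 qubits fit in 16 GiB, while 50 qubits need about 16 million GiB.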

Recently, researchers used programmable quantum processors to model the electronic structure of complex molecules such as iron-based catalysts—systems so intricate that even top-tier supercomputers struggle with exact calculations (Nature, 2023). Classical machines must approximate electron interactions; quantum simulators represent them directly. That distinction is subtle but decisive.

Skeptics argue we haven’t crossed the “quantum advantage” line yet. Fair point. Quantum advantage means solving a commercially or scientifically meaningful problem faster or more accurately than any classical alternative. Not a lab trick. Not a publicity stunt. However, these molecular simulations are early steps toward that threshold—especially as error rates decline and qubit counts scale.

What often gets missed is the systems-level impact. These advances aren’t isolated quantum computing milestones; they directly shape smart device innovation. Better molecular modeling accelerates drug discovery, improves battery cathode materials, and refines industrial catalysts. In turn, that feeds into longer-lasting wearables, faster-charging EVs, and more efficient grid storage.

So while critics focus on qubit counts, the real edge lies in application layering—embedding quantum simulation insights into existing R&D pipelines. That’s where practical advantage quietly compounds (and where the future gets interesting).

The Dawn of the Quantum Internet: Entanglement Over Distance

Beyond a single processor, researchers are wiring quantum machines together, aiming for networks that think in tandem. “When we entangle qubits across cities, we’re building the backbone of tomorrow’s internet,” one Delft physicist said during a testbed demonstration. In both Delft and Chicago, teams successfully entangled qubits over tens of kilometers of fiber optic cable—no small feat when quantum states collapse at the slightest disturbance.

Skeptics argue long-distance stability remains fragile (and they’re not wrong). But these trials prove foundational protocols for quantum communication and cryptography actually work outside pristine labs. As one engineer put it, “This isn’t theory anymore.”
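One reason distance is so hard is plain photon loss in the fiber itself. The sketch below assumes an attenuation of 0.2 dB per kilometer, a typical figure for telecom fiber near 1550 nm. Deployed links differ, and the calculation ignores connectors, detectors, and every other loss source, so read the function and its default as illustrative assumptions.

```python
def fiber_transmission(length_km: float, attenuation_db_per_km: float = 0.2) -> float:
    """Fraction of photons surviving a fiber of the given length.

    Uses the standard decibel relation: T = 10 ** (-loss_dB / 10).
    The default attenuation is an assumed typical value, not a measurement.
    """
    if length_km < 0:
        raise ValueError("length_km must be non-negative")
    loss_db = attenuation_db_per_km * length_km
    return 10 ** (-loss_db / 10)


for km in (10, 50, 100, 500):
    print(f"{km} km -> survival fraction {fiber_transmission(km):.2e}")
```

Because the survival fraction falls exponentially with length, and quantum states cannot simply be amplified like classical signals, tens of kilometers is already a meaningful result.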

It’s one of the defining quantum computing milestones, signaling that networks whose security rests on physics rather than on computational hardness may eventually move from experiment to infrastructure.

The Next Move Is Yours

You came here to understand how emerging technologies and quantum computing milestones are reshaping the future of innovation. Now you have a clearer view of the breakthroughs driving smarter devices, stronger networks, and faster optimization across industries.

The reality is simple: technology is evolving faster than most teams can adapt. Falling behind on critical advancements means missed opportunities, weaker infrastructure, and competitors gaining ground.

Stay ahead instead of scrambling to catch up.

Start tracking breakthrough alerts, monitor key quantum computing milestones, and apply actionable tech optimization strategies before they become mainstream. Tap into proven insights trusted by forward-thinking innovators who rely on real-time analysis to guide smarter decisions.

Don’t wait for disruption to force your hand. Plug in now, stay informed, and position yourself at the front of the next wave of technological acceleration.