Technology is evolving at a pace that makes yesterday’s breakthrough feel outdated by morning. If you’re searching for clear, actionable insights on innovation alerts, Pax tech concepts, smart device advancements, and network architecture shifts, this article is built for you. We focus on what actually matters—how emerging tools, distributed computing concepts, and optimization strategies are reshaping performance, scalability, and everyday tech experiences.

The challenge isn’t access to information. It’s filtering signal from noise. New frameworks, smarter devices, and increasingly complex infrastructures demand a deeper understanding of how systems connect, adapt, and scale in real-world environments.

This piece cuts through the hype. Drawing from hands-on analysis of evolving network architectures and performance optimization trends, we break down the most relevant developments and explain how they translate into practical advantages. Whether you’re refining infrastructure, exploring Pax innovations, or optimizing smart ecosystems, you’ll find focused insights designed to help you stay ahead—strategically and technically.

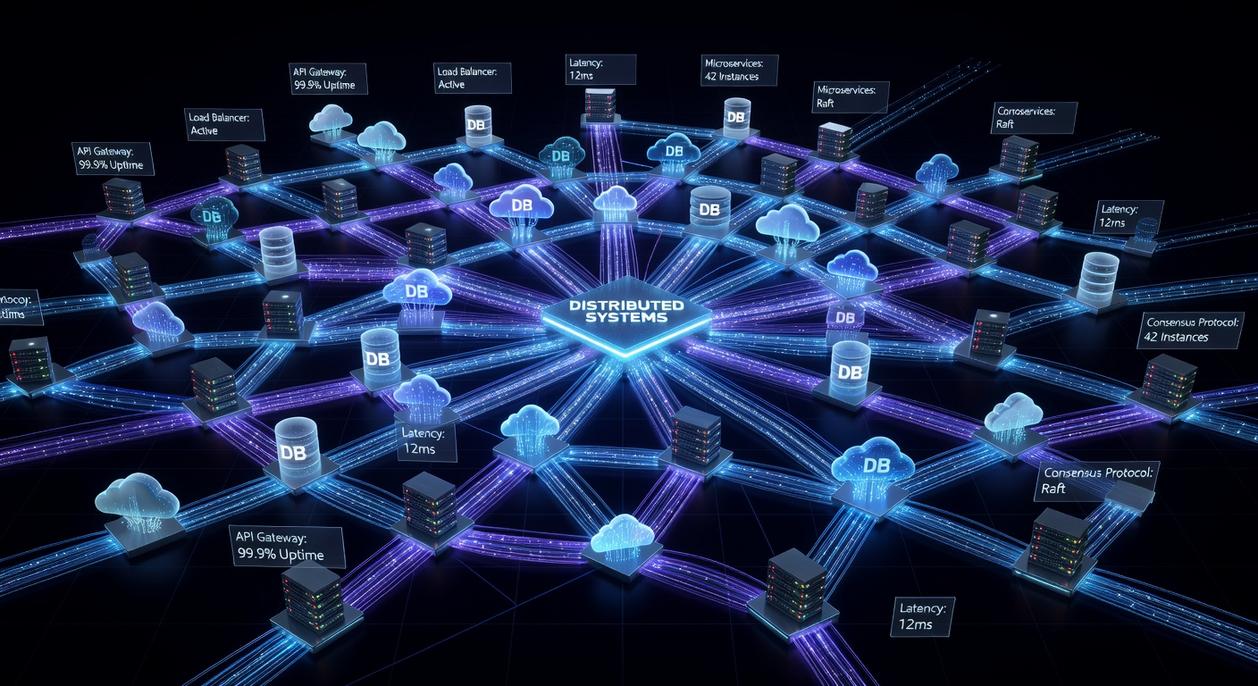

Last night, I tapped my phone to stream a show and check my bank balance, and it struck me how invisible the machinery really is. Every swipe triggers a symphony of machines scattered across the globe. The core problem is simple to ask, hard to solve: how do hundreds or thousands of independent computers behave like one reliable, lightning-fast brain? When one fails, another must respond instantly (no dramatic outage allowed). This is where distributed computing concepts step in: fault tolerance, scalability, redundancy. I’ll break these architectural rules down clearly, so the modern internet finally makes intuitive sense.

Principle 1: Scaling on Demand for Infinite Capacity

Let’s start with scalability. In simple terms, scalability is a system’s ability to handle growth without falling apart like a folding chair at a family reunion. There are two main approaches:

- Vertical scaling: upgrading to a bigger machine (more CPU, more RAM, more power).

- Horizontal scaling: adding more machines to share the workload.

Vertical scaling is like buying a monster truck to deliver pizzas. Impressive, but limited. There’s always a ceiling. Horizontal scaling, on the other hand, is why modern distributed systems thrive. You just keep adding servers as demand increases.

Now enter elasticity—the ability to automatically add or remove resources in real time based on demand. Instead of guessing how much capacity you’ll need (and praying you guessed right), elastic systems adjust on the fly. No over-provisioning. No crashing when traffic spikes. Everyone stays calm.

Picture a retail site during Black Friday. At 2 a.m., it serves a hundred shoppers. By noon, a million. Elastic infrastructure scales up seamlessly, then scales back down after the frenzy. Magic? Not quite. Just smart engineering.
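To make the Black Friday picture concrete, here is a minimal sketch of the decision an elastic system makes over and over: given the current load, how many servers should be running? The function name, the per-server capacity of 500 requests per second, and the floor and ceiling values are all assumptions chosen for illustration, not figures from any real platform.

```python
import math

def desired_servers(requests_per_sec: float,
                    capacity_per_server: float = 500.0,  # assumed capacity, illustrative only
                    min_servers: int = 2,                # assumed floor so one crash is survivable
                    max_servers: int = 5000) -> int:     # assumed budget ceiling
    """Return how many servers the current load calls for, within fixed bounds."""
    needed = math.ceil(requests_per_sec / capacity_per_server)
    return max(min_servers, min(max_servers, needed))

# Hypothetical load over one day: quiet night, midday spike, evening cool-down.
for hour, load in [("02:00", 100), ("12:00", 1_000_000), ("23:00", 5_000)]:
    print(hour, desired_servers(load))
# 02:00 2
# 12:00 2000
# 23:00 10
```

Real autoscalers usually add cool-down periods so the fleet does not flap up and down on every small change; that detail is left out here to keep the core idea visible.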

Here’s the tech optimization hack: load balancers. Think of them as traffic cops directing incoming requests across servers. They ensure no single machine gets overwhelmed while others sip coffee.
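The traffic-cop idea can be sketched in a few lines. The example below uses a "least connections" rule, which is just one common strategy among several (round robin is another); the class name and server names are hypothetical.

```python
class LeastConnectionsBalancer:
    """Send each new request to the server with the fewest active requests."""

    def __init__(self, servers):
        # Track how many requests each server is currently handling.
        self.active = {name: 0 for name in servers}

    def acquire(self) -> str:
        # Pick the least busy server; ties go to the first one listed.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server: str) -> None:
        # Call this when a request finishes so the server frees up.
        self.active[server] -= 1

lb = LeastConnectionsBalancer(["app-1", "app-2", "app-3"])
print([lb.acquire() for _ in range(6)])
# ['app-1', 'app-2', 'app-3', 'app-1', 'app-2', 'app-3']
```

With equal request durations this behaves like round robin. The difference shows up when one request runs long: the busy server stops receiving new work until it catches up.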

Pro tip: Monitor response times, not just server count, when tuning a distributed system for real-world performance. Because what’s the point of infinite capacity if users still wait?
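One way to act on that tip is to watch tail latency rather than the average, since a handful of slow requests can hide behind a healthy-looking mean. The sketch below uses the simple nearest-rank percentile method; the sample latencies are made up for illustration.

```python
def percentile(samples, pct: int):
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding surprises.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[max(rank, 1) - 1]

# Hypothetical response times in milliseconds: 18 fast requests, 2 very slow ones.
latencies_ms = [40] * 18 + [900, 1200]

print(sum(latencies_ms) / len(latencies_ms))  # 141.0 (the mean matches no real request)
print(percentile(latencies_ms, 50))           # 40
print(percentile(latencies_ms, 95))           # 900
```

Here the median says everything is fine while the 95th percentile shows that one user in twenty waits close to a second, which is the signal that matters when deciding whether to scale.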

Principle 2: Designing for Failure with Fault Tolerance

In large-scale systems, failure isn’t a possibility—it’s a statistical certainty. Hardware overheats in Phoenix data centers. Fiber gets cut during roadwork in Chicago. Cloud instances in us-east-1 occasionally time out. Something will break. Fault tolerance means designing so users barely notice when it does.

Some engineers argue that modern infrastructure is reliable enough that heavy redundancy is overkill (and expensive). Why duplicate servers across regions when uptime averages 99.9%? Because 0.1% downtime for a payment processor during Black Friday isn’t theoretical—it’s lost revenue. Fault tolerance assumes failure and builds around it.
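It helps to turn that percentage into hours. The short calculation below assumes a 365-day year and treats availability as a simple fraction of total time; the function name is just for illustration.

```python
HOURS_PER_YEAR = 365 * 24  # 8760, assuming a non-leap year

def downtime_hours_per_year(availability_pct: float) -> float:
    """Convert an availability percentage into expected hours of downtime per year."""
    return HOURS_PER_YEAR * (1 - availability_pct / 100)

for pct in (99.9, 99.99):
    print(f"{pct}% uptime -> {downtime_hours_per_year(pct):.2f} hours down per year")
# 99.9% uptime -> 8.76 hours down per year
# 99.99% uptime -> 0.88 hours down per year
```

Nearly nine hours a year sounds small until all of it lands on the busiest shopping day.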

Redundancy is the frontline defense. Data is replicated across multiple machines and geographic regions. If one node crashes, another steps in automatically. Load balancers reroute traffic. Databases mirror writes. This is foundational to distributed computing, where resilience outweighs perfection.
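Here is a toy version of mirrored writes and automatic failover, assuming in-memory dictionaries stand in for real databases. The class names and region labels are hypothetical, and a real system would also need to bring a recovered replica back up to date, which this sketch skips.

```python
class Replica:
    def __init__(self, name: str):
        self.name = name
        self.data = {}
        self.alive = True

class ReplicatedStore:
    def __init__(self, replicas):
        self.replicas = replicas

    def write(self, key, value) -> None:
        # Mirror the write to every replica that is currently reachable.
        for replica in self.replicas:
            if replica.alive:
                replica.data[key] = value

    def read(self, key):
        # Try replicas in order and skip any that are down.
        for replica in self.replicas:
            if replica.alive and key in replica.data:
                return replica.data[key], replica.name
        raise RuntimeError("no healthy replica holds this key")

store = ReplicatedStore([Replica("us-east"), Replica("eu-west")])
store.write("balance", 100)
store.replicas[0].alive = False   # simulate a regional outage
print(store.read("balance"))      # (100, 'eu-west')
```

The caller never learns that the first region failed, which is the whole point of redundancy.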

But redundancy alone isn’t enough. Systems need agreement. Consensus algorithms like Paxos or Raft ensure a cluster of servers can agree on a single source of truth—even if some nodes are slow, partitioned, or offline. Think of it as a digital jury deliberating until a majority rules (minus the courtroom drama).
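The majority rule at the heart of that jury analogy can be shown in isolation. To be clear, the sketch below is not Paxos or Raft: it has no leader election, terms, or replicated log. It only illustrates why a cluster can keep deciding when a minority of nodes is silent. The function and node names are hypothetical.

```python
from collections import Counter

def majority_decision(votes: dict, cluster_size: int):
    """Return the value backed by a strict majority of the whole cluster, or None.

    `votes` maps node name -> proposed value, only for nodes that responded.
    """
    quorum = cluster_size // 2 + 1
    if not votes:
        return None
    value, count = Counter(votes.values()).most_common(1)[0]
    return value if count >= quorum else None

# Five-node cluster, two nodes offline: three matching votes still form a quorum.
print(majority_decision({"n1": "v2", "n2": "v2", "n3": "v2"}, cluster_size=5))  # v2

# Only two nodes reachable: no majority, so no decision is made.
print(majority_decision({"n1": "v2", "n2": "v2"}, cluster_size=5))  # None
```

Counting against the full cluster size, not just the nodes that answered, is what stops two isolated halves of a partitioned cluster from each declaring a different truth.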

Now look at IoT networks—smart meters in rural grids, warehouse sensors in Rotterdam, or agricultural devices across Iowa farmland. Connectivity drops. Devices sleep to save power. The network must function despite these “expected failures.”
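One common way to cope with those expected failures is store-and-forward: the device keeps readings locally and flushes them when the link returns. Below is a minimal sketch under that assumption; `BufferedSensor`, `FakeUplink`, and the buffer size are hypothetical names and values, and the uplink is simulated rather than a real network client.

```python
from collections import deque

class BufferedSensor:
    def __init__(self, max_buffered: int = 1000):
        # Bounded buffer: once full, the oldest reading is dropped to make room.
        self.pending = deque(maxlen=max_buffered)

    def record(self, reading, send) -> int:
        """Queue a reading, then try to flush the queue. Returns readings delivered."""
        self.pending.append(reading)
        delivered = 0
        while self.pending:
            try:
                send(self.pending[0])
            except ConnectionError:
                break  # link is down; keep everything for the next attempt
            self.pending.popleft()
            delivered += 1
        return delivered

class FakeUplink:
    """Stand-in for a flaky network link, for demonstration only."""
    def __init__(self):
        self.online = False
        self.received = []

    def send(self, reading) -> None:
        if not self.online:
            raise ConnectionError("link down")
        self.received.append(reading)

sensor, link = BufferedSensor(), FakeUplink()
print(sensor.record(21.5, link.send))  # 0
print(sensor.record(21.7, link.send))  # 0
link.online = True
print(sensor.record(21.9, link.send))  # 3
print(link.received)                   # [21.5, 21.7, 21.9]
```

A reading is removed from the buffer only after a successful send, so a dropped connection delays data without losing it, up to the buffer limit.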

Understanding how APIs enable seamless software integration becomes critical when services must fail over without breaking downstream systems.

Pro tip: Design assuming your weakest node defines your reliability—not your strongest.

Principle 3: Navigating the Consistency-Availability Trade-off

If you’ve ever wondered why some apps feel instant while others feel cautious, you’re brushing up against the CAP Theorem. In plain terms, this foundational rule of distributed systems says a system can guarantee only two of three things: Consistency, Availability, and Partition Tolerance. A network partition (when communication between nodes breaks) is inevitable in real-world systems, so the practical choice is what to give up while a partition lasts: consistency or availability. Architects must choose that trade-off wisely.

Here’s what that choice looks like in practice:

- Strong Consistency: Every user sees the exact same data at the same moment. Critical for banking transactions (you definitely don’t want your balance playing hide-and-seek).

- Eventual Consistency: Updates spread across the system over time. Social media likes are fine with slight delays—no one panics if a heart appears two seconds late.
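The two models can be contrasted with a toy cluster in which replicas are plain dictionaries and replication is triggered by hand. Everything here is a simplification for illustration: the class and method names are hypothetical, and real systems replicate over a network with ordering and conflict handling that this sketch leaves out.

```python
class Cluster:
    def __init__(self, n: int):
        self.replicas = [{} for _ in range(n)]
        self.backlog = []  # updates accepted by replica 0 but not yet copied elsewhere

    def write_strong(self, key, value) -> None:
        # Update every replica before returning, so all readers agree immediately.
        self.backlog = [(k, v) for k, v in self.backlog if k != key]
        for replica in self.replicas:
            replica[key] = value

    def write_eventual(self, key, value) -> None:
        # Accept the write on one replica and return right away.
        self.replicas[0][key] = value
        self.backlog.append((key, value))

    def sync(self) -> None:
        # Background replication: copy pending updates to the other replicas.
        for key, value in self.backlog:
            for replica in self.replicas[1:]:
                replica[key] = value
        self.backlog.clear()

    def read(self, replica_index: int, key):
        return self.replicas[replica_index].get(key)

c = Cluster(3)
c.write_strong("balance", 250)
print([c.read(i, "balance") for i in range(3)])  # [250, 250, 250]

c.write_eventual("likes", 1)
print([c.read(i, "likes") for i in range(3)])    # [1, None, None]
c.sync()
print([c.read(i, "likes") for i in range(3)])    # [1, 1, 1]
```

The middle line is the "heart appears two seconds late" moment: the data is not wrong, it just has not arrived everywhere yet.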

Some argue strong consistency should always be the goal. After all, accuracy builds trust. True—but it often increases latency because systems must coordinate before confirming updates (think of it as waiting for everyone to raise their hand before moving on). According to Brewer’s original formulation of CAP (Brewer, 2000), these trade-offs are unavoidable in distributed environments.
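The latency cost of waiting for everyone to raise their hand comes down to simple arithmetic. In the sketch below, a write that waits for all replicas is as slow as the slowest one, while a write acknowledged by the nearest replica is as fast as the fastest. The round-trip times are invented for illustration, and the model ignores retries and processing time.

```python
def ack_latency_ms(replica_latencies_ms, wait_for_all: bool) -> float:
    """Time until a write is acknowledged, given each replica's round-trip time."""
    if wait_for_all:
        return max(replica_latencies_ms)  # coordinated write: the slowest replica sets the pace
    return min(replica_latencies_ms)      # local ack: others catch up in the background

# Hypothetical round trips: same data center, same continent, overseas.
round_trips = [2, 45, 120]
print(ack_latency_ms(round_trips, wait_for_all=True))   # 120
print(ack_latency_ms(round_trips, wait_for_all=False))  # 2
```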

That’s why your newly uploaded profile picture might not appear instantly for every friend. The system prioritizes availability and partition tolerance, letting updates sync in the background.

What’s next? If you’re designing or evaluating systems, ask: What failure can we tolerate most—delay, downtime, or stale data? Your answer shapes architecture, performance, and ultimately user trust.

Scalability, fault tolerance, and consistency models are not isolated engineering buzzwords; they are interlocking gears in the same machine. When one slips, the whole system grinds. In fact, Amazon famously reported that every 100 milliseconds of latency cost them 1% in sales (Amazon internal data, cited widely in performance studies). That’s scalability and consistency colliding with real revenue.

At the same time, Google’s early research on large-scale systems showed that hardware failures are not rare events but daily occurrences in massive clusters. In other words, failure isn’t an exception—it’s the baseline. That reality is why designing for redundancy and graceful degradation matters so much.
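Graceful degradation often means serving something slightly old instead of serving an error. A minimal sketch, assuming the last good response is kept in a local dictionary; the function names and the "home" key are hypothetical.

```python
def fetch_with_fallback(fetch_live, cache: dict, key: str):
    """Return (value, source). Serve the last known value if the live call fails."""
    try:
        value = fetch_live(key)
    except (ConnectionError, TimeoutError):
        if key in cache:
            return cache[key], "stale-cache"
        raise  # nothing cached, so the failure has to surface
    cache[key] = value  # remember the fresh value for the next outage
    return value, "live"

cache = {}

def healthy(key):
    return ["Show A", "Show B"]

def broken(key):
    raise TimeoutError("recommendation service unavailable")

print(fetch_with_fallback(healthy, cache, "home"))  # (['Show A', 'Show B'], 'live')
print(fetch_with_fallback(broken, cache, "home"))   # (['Show A', 'Show B'], 'stale-cache')
```

Whether stale data is acceptable depends on the feature: fine for a list of recommendations, not fine for an account balance.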

And yet, some argue modern cloud platforms “handle all that for you.” It’s true that providers abstract complexity. However, outages at major infrastructure providers, like the June 2021 Fastly CDN disruption that briefly took many high-profile websites offline, prove that resilient architecture still depends on sound distributed computing concepts applied thoughtfully.

So what’s the real challenge? Getting independent machines to behave like a single, reliable system—without sacrificing speed.

By embracing these principles together, engineers transform fragility into resilience. Next time your streaming app buffers or your payment clears instantly, pause and ask: what invisible trade-offs made that possible?

You came here looking for clarity on how today’s tech advancements, smart devices, and network innovations actually fit together—and now you have it. From emerging architectures to practical optimization strategies, you’ve seen how distributed computing concepts power scalability, resilience, and real-time performance across modern systems.

The reality is this: without a strong grasp of distributed computing concepts, networks become bottlenecks, devices underperform, and innovation stalls. That’s the pain point holding many teams back—not a lack of tools, but a lack of strategic integration.

Now it’s time to act. Audit your current infrastructure, identify weak points in scalability and latency, and start applying smarter optimization frameworks immediately. If you want faster systems, stronger networks, and future-ready architecture, don’t wait.

Build Smarter, Scale Faster

The next breakthrough won’t come from doing more—it’ll come from designing better. Leverage proven distributed computing concepts, implement advanced optimization hacks, and stay ahead of shifting tech demands. Thousands of forward-thinking innovators already rely on trusted innovation alerts and advanced network insights to stay competitive.

Take the next step now: upgrade your architecture strategy, refine your device ecosystem, and implement performance-driven optimizations today. Your infrastructure should accelerate growth—not limit it.