Technology is evolving at a pace that makes even the most connected professionals feel a step behind. If you’re searching for clear insights on emerging tech concepts, smarter devices, stronger network architecture, and practical optimization strategies, this article is built for you. We break down the latest innovation alerts, unpack forward-thinking Pax tech concepts, and highlight the smart device advancements reshaping how systems communicate and scale.

You won’t find vague predictions here. Instead, we focus on real-world applications—how scalable data structures improve performance, how modern network frameworks reduce latency, and how targeted optimization hacks can dramatically increase efficiency. Every insight is grounded in current industry research, hands-on technical analysis, and validated architecture models reviewed by experienced engineers and system designers.

Whether you’re refining infrastructure, exploring next-gen device ecosystems, or optimizing existing deployments, this guide delivers actionable intelligence to help you stay ahead in a rapidly shifting tech landscape.

Data is growing faster than most systems can handle. When traffic spikes, poorly designed architectures choke, causing latency, crashes, and costly downtime. The fix isn’t bigger servers; it’s smarter design using scalable data structures built for concurrency and distribution.

In this guide, you’ll discover:

- Hash partitioning for balanced workloads

- LSM trees powering high-write databases

- Bloom filters that cut unnecessary queries

The benefit? Faster response times, lower infrastructure costs, and systems that stay stable under pressure (yes, even during viral moments). Build correctly from day one, and you gain performance headroom, happier users, and long-term resilience at scale.

The Breaking Point: Why Standard Arrays and Lists Fail

THE LINEAR TRAP starts innocently. Arrays and simple lists feel efficient—until they scale. Searching or inserting often runs in O(n) time, meaning performance degrades as data grows. Double the data, double the work. At a million records, that’s not “slower.” That’s SYSTEM STRAIN. (Think buffering in the middle of a season finale.)
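To make the O(n) claim concrete, here is a minimal Python sketch. The helper name `linear_search_steps` is hypothetical and exists only for this illustration; it counts how many elements a scan touches before it gives up.

```python
def linear_search_steps(items, target):
    """Scan items left to right; return (found, elements_examined)."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            return True, steps
    return False, steps


small = list(range(1_000))
large = list(range(2_000))

# Worst case: the target is absent, so every element is examined.
_, small_steps = linear_search_steps(small, -1)
_, large_steps = linear_search_steps(large, -1)
print(small_steps, large_steps)  # prints: 1000 2000
```

Twice the data, twice the comparisons. The same scan over a million records touches a million elements per miss.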

Worse, arrays require contiguous memory—continuous blocks of RAM. When memory fragments, allocation fails or forces costly resizing. Linked lists avoid contiguous storage but introduce pointer overhead and brutal multi-threading challenges. Without complex locking mechanisms, race conditions corrupt data. With locks? You sacrifice speed.
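The locking trade-off can be sketched with a toy linked list in Python. Everything here (`Node`, `LockedLinkedList`, `fill_concurrently`) is a hypothetical illustration, not a production structure, and it assumes the simplest possible strategy: one coarse lock around every insert.

```python
import threading


class Node:
    def __init__(self, value, next_node=None):
        self.value = value
        self.next = next_node


class LockedLinkedList:
    """Singly linked list whose head insert is guarded by one coarse lock."""

    def __init__(self):
        self.head = None
        self._lock = threading.Lock()

    def push_front(self, value):
        # Reading head and then replacing it is a two-step update. Without the
        # lock, two threads could read the same head and one node would be lost.
        with self._lock:
            self.head = Node(value, self.head)

    def __len__(self):
        count, node = 0, self.head
        while node is not None:
            count += 1
            node = node.next
        return count


def fill_concurrently(num_threads=4, per_thread=1_000):
    """Insert from several threads at once and return the resulting list."""
    lst = LockedLinkedList()

    def worker(thread_id):
        for i in range(per_thread):
            lst.push_front((thread_id, i))

    threads = [threading.Thread(target=worker, args=(t,)) for t in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return lst


print(len(fill_concurrently()))  # prints: 4000
```

The lock keeps every node, but it also forces all writers through a single gate, which is exactly the speed cost described above.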

The business impact is measurable:

- Slower queries increase server load

- Higher compute usage drives cloud costs

- Lag erodes user trust

Some argue hardware upgrades solve this. Short term? Maybe. Long term, inefficiency compounds. Modern systems demand scalable data structures designed for FLOW, not just storage. The shift isn’t optional—it’s architectural.

The Engine of Distributed Systems: Hashing for Scale

Distributed systems need a way to decide who owns what. That’s where Distributed Hash Tables (DHTs) come in. A DHT is a decentralized lookup system that assigns data to nodes using a hash function (a mathematical process that converts input into a fixed-size value). Instead of one central database, each node owns a slice of the keyspace—making horizontal scaling possible.

Core idea: when data grows, you add nodes—not bigger machines. (Think less “supercomputer,” more “Avengers assemble.”)

The Key Innovation: Consistent Hashing

Traditional modulo hashing (hash(key) mod N) breaks when nodes join or leave: changing N remaps most keys. Consistent hashing minimizes reshuffling by mapping both data and nodes onto a logical ring. When a node disappears, only the keys it owned move, and they go to its neighbor on the ring. This elasticity underpins systems like Amazon’s DynamoDB and Apache Cassandra, both inspired by Dynamo’s 2007 paper (DeCandia et al., 2007).
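Here is a minimal Python sketch of the ring idea, compared with naive modulo placement. The names `stable_hash` and `HashRing`, the node labels, and the choice of 100 virtual points per node are all assumptions made for illustration; real systems such as Cassandra have their own token schemes.

```python
import bisect
import hashlib


def stable_hash(value: str) -> int:
    """64-bit integer from SHA-256; used for placement only."""
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")


class HashRing:
    def __init__(self, nodes, vnodes=100):
        self.vnodes = vnodes
        self._points = []  # sorted (position, node) pairs on the ring
        for node in nodes:
            self.add(node)

    def add(self, node):
        # Each node is placed at many positions to even out its share.
        for i in range(self.vnodes):
            bisect.insort(self._points, (stable_hash(f"{node}#{i}"), node))

    def remove(self, node):
        self._points = [p for p in self._points if p[1] != node]

    def owner(self, key):
        # First ring point at or after the key's position, wrapping around.
        i = bisect.bisect_left(self._points, (stable_hash(key), ""))
        return self._points[i % len(self._points)][1]


keys = [f"user:{i}" for i in range(10_000)]

ring = HashRing(["node-a", "node-b", "node-c", "node-d"])
before = {k: ring.owner(k) for k in keys}
ring.remove("node-c")
after = {k: ring.owner(k) for k in keys}
moved_ring = [k for k in keys if before[k] != after[k]]

# Naive modulo placement for comparison: shrink from 4 nodes to 3.
moved_modulo = [k for k in keys if stable_hash(k) % 4 != stable_hash(k) % 3]

print(f"ring: {len(moved_ring)} keys moved, modulo: {len(moved_modulo)} keys moved")
```

On the ring, the only keys that change owner are the ones `node-c` held. With modulo placement, roughly three quarters of all keys land on a different node after the same change.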

Peer-to-peer networks like BitTorrent also rely on DHTs to locate files efficiently.

Why It Works

| Feature | Benefit |
|---|---|
| Data partitioning | Horizontal scalability |
| Consistent hashing | Minimal redistribution |
| Decentralization | Fault tolerance |

Some argue DHTs add complexity versus centralized databases. True—but at scale, centralized systems create bottlenecks and single points of failure.

Tech Optimization Hack: Choose a hash function with uniform distribution (e.g., MurmurHash). Poor distribution causes “hotspots,” overloading nodes. Pro tip: test key distribution before production rollout.
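One way to run that distribution test is sketched below in Python. MurmurHash is not in the standard library, so this sketch uses `hashlib.blake2b` as a stand-in for a well-mixed hash; the function names, the synthetic `user:N` keys, and the 8-node cluster size are assumptions for illustration. Swap in your real hash function and a sample of production keys.

```python
import hashlib
from collections import Counter


def node_for(key: str, num_nodes: int) -> int:
    """Place a key with a well-mixed hash (BLAKE2b here, as a stand-in)."""
    digest = hashlib.blake2b(key.encode(), digest_size=8).digest()
    return int.from_bytes(digest, "big") % num_nodes


def weak_node_for(key: str, num_nodes: int) -> int:
    """Deliberately poor placement: only looks at the first character."""
    return ord(key[0]) % num_nodes


def skew(keys, num_nodes, place):
    """Busiest node's load divided by the ideal even share (1.0 is perfect)."""
    counts = Counter(place(k, num_nodes) for k in keys)
    return max(counts.values()) / (len(keys) / num_nodes)


sample_keys = [f"user:{i}" for i in range(100_000)]
print(f"well-mixed hash skew: {skew(sample_keys, 8, node_for):.3f}")
print(f"first-char hash skew: {skew(sample_keys, 8, weak_node_for):.3f}")
```

A skew near 1.0 means load is spread evenly. The weak function sends every `user:` key to the same node, so its skew equals the node count: a textbook hotspot.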

Use scalable data structures alongside consistent hashing for maximum resilience.


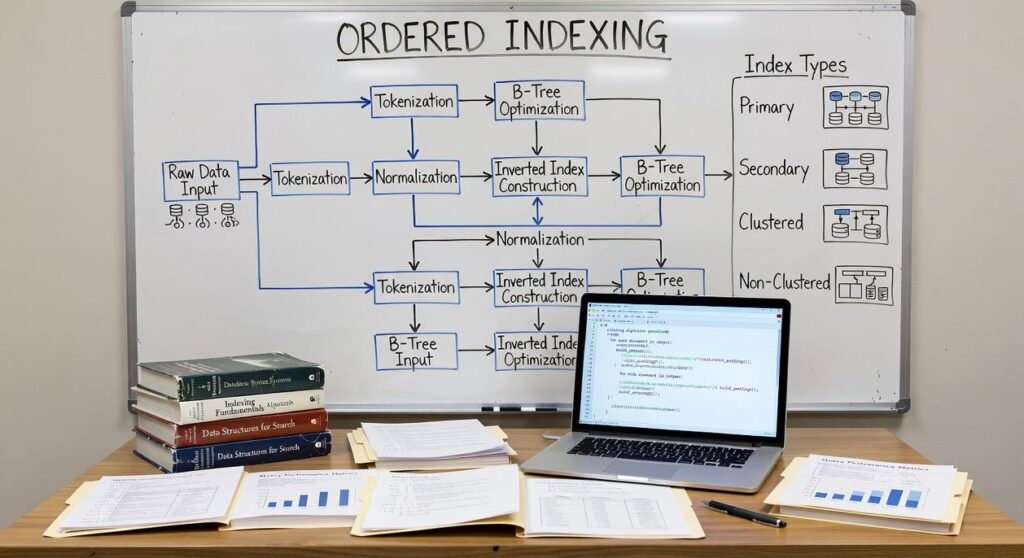

Taming Ordered Data: B-Trees vs. LSM-Trees

Choosing between B-Trees and LSM-Trees isn’t just academic—it directly shapes PERFORMANCE, COST, and SCALABILITY. Get it right, and your system feels effortless. Get it wrong, and you’re fighting latency spikes at 2 a.m. (not fun).

For Read-Heavy Workloads: B-Trees

B-Trees are optimized for environments where reads dominate writes. A B-Tree is a self-balancing tree structure with a high branching factor—meaning each node contains many keys and child pointers. That design minimizes disk I/O because fewer disk pages must be accessed to locate data.
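A quick back-of-the-envelope calculation in Python shows why branching factor matters so much. The helper `levels_needed` is hypothetical, and it assumes every node is completely full, which real B-Trees are not, so treat the results as a lower bound on tree height.

```python
def levels_needed(num_keys: int, fanout: int) -> int:
    """Smallest number of levels whose capacity (fanout ** levels) covers num_keys.

    Simplification: assumes every node is completely full.
    """
    levels, capacity = 1, fanout
    while capacity < num_keys:
        capacity *= fanout
        levels += 1
    return levels


for fanout in (2, 100, 500):
    print(fanout, levels_needed(1_000_000_000, fanout))
# prints:
# 2 30
# 100 5
# 500 4
```

For a billion keys, a binary tree needs about 30 levels while a tree with 500 children per node needs about 4. If each level costs one page read, that is the difference between 30 disk accesses and 4.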

In traditional relational databases like PostgreSQL, this structure keeps lookup paths short and predictable. The benefit? FAST, CONSISTENT QUERY PERFORMANCE for dashboards, reporting systems, and transactional apps. If your workload involves frequent SELECT queries and moderate updates, B-Trees deliver stability without operational gymnastics.

For Write-Heavy Workloads: LSM-Trees

LSM-Trees (Log-Structured Merge-Trees) shine when writes come in fast and furious. Instead of updating data in place, they buffer writes in memory (memtables) and flush them sequentially to disk as SSTables. Sequential writes are dramatically faster than random writes on spinning disks and still advantageous on SSDs (see O’Neil et al., 1996).
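The write path can be sketched in a few lines of Python. This `TinyLSM` class is a toy under loud assumptions: in-memory sorted lists stand in for on-disk SSTables, there is no write-ahead log, no deletes, and compaction simply merges everything into one table. It is meant to show the shape of the design, not how RocksDB or ScyllaDB implement it.

```python
import bisect


class TinyLSM:
    def __init__(self, memtable_limit=4):
        self.memtable_limit = memtable_limit
        self.memtable = {}   # recent writes, held in memory
        self.sstables = []   # immutable sorted runs, oldest first

    def put(self, key, value):
        self.memtable[key] = value
        if len(self.memtable) >= self.memtable_limit:
            self.flush()

    def flush(self):
        # Write the memtable out as one sorted run (a sequential write on disk).
        if self.memtable:
            self.sstables.append(sorted(self.memtable.items()))
            self.memtable = {}

    def get(self, key):
        if key in self.memtable:
            return self.memtable[key]
        # Newest run first, so the latest value for a key wins.
        for table in reversed(self.sstables):
            i = bisect.bisect_left(table, (key,))
            if i < len(table) and table[i][0] == key:
                return table[i][1]
        return None

    def compact(self):
        # Merge all runs into one; newer runs overwrite older entries.
        merged = {}
        for table in self.sstables:
            merged.update(table)
        self.sstables = [sorted(merged.items())] if merged else []


db = TinyLSM(memtable_limit=2)
for key, value in [("a", 1), ("b", 2), ("a", 3), ("c", 4), ("d", 5)]:
    db.put(key, value)

print(db.get("a"), len(db.sstables))  # prints: 3 2
db.compact()
print(db.get("a"), len(db.sstables))  # prints: 3 1
```

Notice that a read may have to check several runs, while a write only touches the memtable. That asymmetry is why LSM-Trees favor ingestion and why compaction overhead becomes the thing to watch.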

Databases like RocksDB and ScyllaDB rely on this design to ingest massive event streams. The upside? HIGH WRITE THROUGHPUT and better hardware utilization for logging, IoT ingestion, and telemetry pipelines.

The Deciding Factor

- Choose B-Trees for balanced or read-dominant systems needing predictable latency.

- Choose LSM-Trees for rapid data ingestion at scale.

Architecturally, this choice affects storage footprint, memory pressure, and compaction overhead. Understanding these scalable data structures means fewer bottlenecks—and a system built for your real-world workload, not just theory.

Approximate Accuracy, Perfect Performance: Probabilistic Data Structures

Perfection is expensive. In big data systems, demanding 100% accuracy often means ballooning memory and slower queries. Probabilistic data structures flip that script: they trade a tiny margin of uncertainty for massive gains in speed and efficiency. In streaming analytics, that trade-off isn’t risky—it’s strategic.

Take Bloom filters, a structure for membership testing. They can say with certainty that an item is not in a set, or that it probably is. That small ambiguity saves enormous space compared to hash sets. A social platform, for example, can prevent duplicate posts in your feed without storing every ID in memory (no one wants déjà vu scrolling).
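A minimal Bloom filter fits in about twenty lines of Python. The sizes below (8,192 bits, 4 hash positions) and the trick of deriving all positions from one SHA-256 digest are assumptions chosen for illustration; production filters size these from the expected item count and target false-positive rate.

```python
import hashlib


class BloomFilter:
    def __init__(self, num_bits=8192, num_hashes=4):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, item: str):
        # Derive several bit positions from two halves of one digest.
        digest = hashlib.sha256(item.encode()).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        return [(h1 + i * h2) % self.num_bits for i in range(self.num_hashes)]

    def add(self, item: str):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item: str) -> bool:
        # False means "definitely not present"; True means "probably present".
        return all(self.bits[pos // 8] & (1 << (pos % 8))
                   for pos in self._positions(item))


seen_posts = BloomFilter()
for i in range(500):
    seen_posts.add(f"post:{i}")

print(seen_posts.might_contain("post:42"))  # prints: True
print(len(seen_posts.bits))                 # prints: 1024
```

Five hundred IDs are tracked in 1,024 bytes. An item that was added always comes back `True`; an item that was never added usually comes back `False`, with a small chance of a false positive.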

Then there’s HyperLogLog, built for cardinality estimation: counting unique elements in massive datasets. It can estimate billions of unique visitors using only kilobytes of memory, making real-time analytics feasible for high-traffic websites. Critics argue approximation risks flawed metrics. In practice, the standard error is about 1.04/√m for m registers, so a few kilobytes of registers typically keeps error around 1–2% (Flajolet et al., 2007), a negligible trade for operational agility.
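For the curious, here is a compact HyperLogLog sketch in Python. It follows the basic estimator with the small-range correction from the original paper, but the class layout, the SHA-256 hash, and the default of 4,096 registers are assumptions for illustration. A Python list is also far less compact than the packed few-bit registers a real implementation would use.

```python
import hashlib
import math


class HyperLogLog:
    def __init__(self, p=12):
        self.p = p                    # index bits
        self.m = 1 << p               # number of registers
        self.registers = [0] * self.m
        self.alpha = 0.7213 / (1 + 1.079 / self.m)  # bias constant, m >= 128

    def add(self, item: str):
        h = int.from_bytes(hashlib.sha256(item.encode()).digest()[:8], "big")
        index = h >> (64 - self.p)                 # first p bits pick a register
        rest = h & ((1 << (64 - self.p)) - 1)      # remaining bits
        # Rank = position of the leftmost 1-bit in the remaining bits.
        rank = (64 - self.p) - rest.bit_length() + 1
        self.registers[index] = max(self.registers[index], rank)

    def count(self) -> float:
        harmonic = sum(2.0 ** -r for r in self.registers)
        estimate = self.alpha * self.m * self.m / harmonic
        zeros = self.registers.count(0)
        if estimate <= 2.5 * self.m and zeros:
            # Small-range correction: fall back to linear counting.
            estimate = self.m * math.log(self.m / zeros)
        return estimate


hll = HyperLogLog()
for visit in range(2):                 # every visitor shows up twice
    for i in range(100_000):
        hll.add(f"visitor:{i}")

print(round(hll.count()))              # close to 100000
print(f"expected standard error: {1.04 / math.sqrt(hll.m):.2%}")
```

Duplicates never raise the estimate, because a repeated item hashes to the same register and the same rank. With 4,096 registers the expected standard error is about 1.6%.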

These techniques shine in constrained environments:

- Network routers filtering malicious IPs

- Mobile apps tracking unique events locally

Use these probabilistic structures to unlock performance ceilings traditional databases simply can’t reach. In a world of smart devices, approximation isn’t compromise; it’s competitive advantage.

True scalability isn’t about throwing bigger servers at the problem; it’s about choosing scalable data structures that expand gracefully. I’ve seen teams chase hardware upgrades like it’s a Marvel power-up, only to hit the same ceiling. Your architecture defines your limits. An early misstep here becomes brutal technical debt—rewriting core storage layers is far costlier than speccing them right.

DHTs handle distribution, B-Trees and LSM-Trees optimize storage, and probabilistic structures keep queries efficient at hyper-scale. Some argue premature optimization is wasteful. I disagree. Audit your systems today: foundation for growth, or tomorrow’s bottleneck? Choose wisely, before scale makes the choice for you.

Build Smarter, Scale Faster

You came here looking for clarity on how to future‑proof your systems and stay ahead of accelerating tech demands. Now you understand how smarter architecture, automation, and scalable data structures work together to eliminate bottlenecks and unlock real performance gains.

The reality is simple: outdated frameworks and inefficient systems slow growth, increase costs, and create constant technical friction. If your infrastructure can’t scale cleanly, every new opportunity becomes a risk instead of an advantage.

That’s why applying these insights matters. When you implement optimized network design, forward‑thinking Pax concepts, and scalable data structures, you create systems that expand smoothly instead of breaking under pressure.

Now take the next step. Start auditing your current architecture, identify weak scalability points, and implement the optimization strategies outlined above. If you want proven tech innovation alerts, actionable architecture insights, and performance‑boosting strategies trusted by forward‑thinking builders, plug into our updates today.

Don’t let system limitations stall your growth. Upgrade your approach, optimize your stack, and build technology that scales as fast as your ambition.